File transfers are an essential part of many businesses’ operations, especially when large files are involved. When you integrate file transfers with an API in your business systems, it can be difficult to find a single solution that meets every technical requirement. In our previous blog, we discussed the façade approach. In this post, we explore the dual system approach, which combines Managed File Transfer (MFT) and API gateway functionality to overcome this challenge.

The Dual System Approach

Combining file transfers with API functionality can be difficult. In some scenarios, neither your Managed File Transfer (MFT) solution nor your API gateway can fully meet the technical requirements of the integration on its own.

This can happen, for example, when the transmitted files are large enough to cause performance issues on your gateway, while the API logic cannot easily be handled in an MFT solution. In that case, you need a technical solution that combines MFT and gateway functionality and correlates activity across both systems. Keep in mind that this can introduce security considerations that are not part of a typical setup.

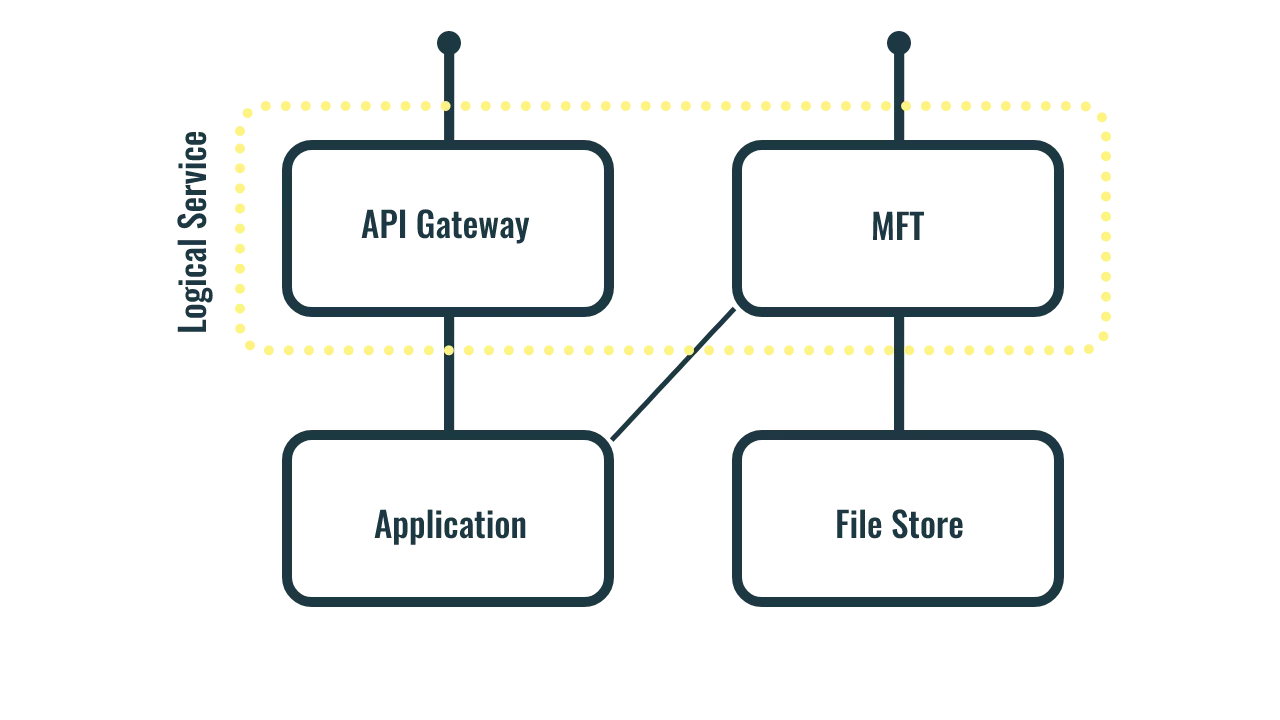

One possible solution is to integrate via two separate systems. This lets you make full use of each system’s strengths, but it also requires additional logic to couple them. Clients integrate with both the API gateway and the MFT solution, while the two systems together form one logical service. Make sure that permissions for files and for the API are correlated properly to prevent security issues.
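
One way to couple the two systems is to let the API issue an identifier that the MFT side can recover from every uploaded file. The Python sketch below assumes the API creates a metadata record first and embeds its document ID in the upload path; `UPLOAD_ROOT`, `DocumentRecord`, `register_document`, and `document_id_from_path` are hypothetical names, not part of any MFT product.

```python
import uuid
from dataclasses import dataclass

# Hypothetical root directory for uploads on the MFT server.
UPLOAD_ROOT = "/uploads"


@dataclass
class DocumentRecord:
    """Metadata the API keeps for a file that is stored on the MFT side."""
    document_id: str
    owner: str
    mft_path: str


def register_document(owner: str, filename: str) -> DocumentRecord:
    """API side: create a metadata record and tell the client where to upload."""
    document_id = str(uuid.uuid4())
    # Embedding the document ID in the path is what links the two systems.
    mft_path = f"{UPLOAD_ROOT}/{document_id}/{filename}"
    return DocumentRecord(document_id=document_id, owner=owner, mft_path=mft_path)


def document_id_from_path(mft_path: str) -> str:
    """MFT side: recover the API document ID from an uploaded file's path."""
    prefix = UPLOAD_ROOT + "/"
    if not mft_path.startswith(prefix):
        raise ValueError(f"path is outside the upload root: {mft_path}")
    return mft_path[len(prefix):].split("/", 1)[0]


# Usage: the client asks the API where to upload, then uploads to the MFT.
record = register_document("alice", "report.pdf")
```

A file-arrival hook on the MFT side could then call `document_id_from_path` to find the matching API record. Whether your MFT solution offers such a hook is product-specific.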

A significant advantage of this approach is that clients connect to the existing API gateway and MFT endpoints, so no additional ingress point is needed and there is no extra infrastructure to secure and maintain.

Security

One of the main concerns when integrating two systems is security. It is crucial that the MFT solution and the API correlate user permissions correctly. One way to achieve this is to secure both systems with the same protocol. For example, if your API is secured with OIDC, securing your MFT with OIDC as well makes it easy to manage file permissions in relation to the API.
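
As a minimal sketch of a shared permission check, assume both the API gateway and the MFT server receive the same OIDC access token and have already validated it (signature, issuer, audience, expiry) with an OIDC library. The function below can then make the same decision in both places. The `documents:read` scope and the owner-only policy are hypothetical.

```python
def has_scope(claims: dict, required: str) -> bool:
    """Return True if the token's space-separated 'scope' claim grants `required`."""
    return required in claims.get("scope", "").split()


def can_read_document(claims: dict, document_owner: str) -> bool:
    """Shared check, called by both the API gateway and the MFT server.

    `claims` is assumed to be the payload of an already-validated token.
    """
    # Hypothetical policy: the caller needs the read scope and must own the document.
    return has_scope(claims, "documents:read") and claims.get("sub") == document_owner


claims = {"sub": "alice", "scope": "openid documents:read"}
allowed = can_read_document(claims, "alice")
```

Keeping the rule in one function (or one shared policy service) means a permission change cannot apply to the API but silently miss the files.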

Using one protocol for both systems, however, requires the products to support the same technologies, which may not be the case. SFTP, for example, does not support OIDC. If your file exchange protocol needs OIDC support, WebDAV may be the best option because it runs over HTTP; more generally, any HTTP-based mechanism supported by your MFT solution could be suitable.
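
Because WebDAV runs over HTTP, a client can upload a file with the same bearer token it sends to the API. The sketch below only builds the request with Python’s standard `urllib.request`; the URL and token are placeholders, and whether your MFT server accepts bearer tokens on its WebDAV endpoint is an assumption to check against its documentation.

```python
import urllib.request


def build_webdav_upload(url: str, access_token: str, payload: bytes) -> urllib.request.Request:
    """Build a WebDAV upload that reuses the OIDC access token issued for the API."""
    return urllib.request.Request(
        url,
        data=payload,
        method="PUT",  # WebDAV stores a file with a plain HTTP PUT
        headers={
            # Same bearer token the client already sends to the API gateway.
            "Authorization": f"Bearer {access_token}",
            "Content-Type": "application/octet-stream",
        },
    )


# Placeholder endpoint and token, for illustration only.
request = build_webdav_upload(
    "https://mft.example.com/webdav/uploads/report.pdf",
    "example-access-token",
    b"file contents",
)
```

Passing the returned request to `urllib.request.urlopen` sends it.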

Lifecycle

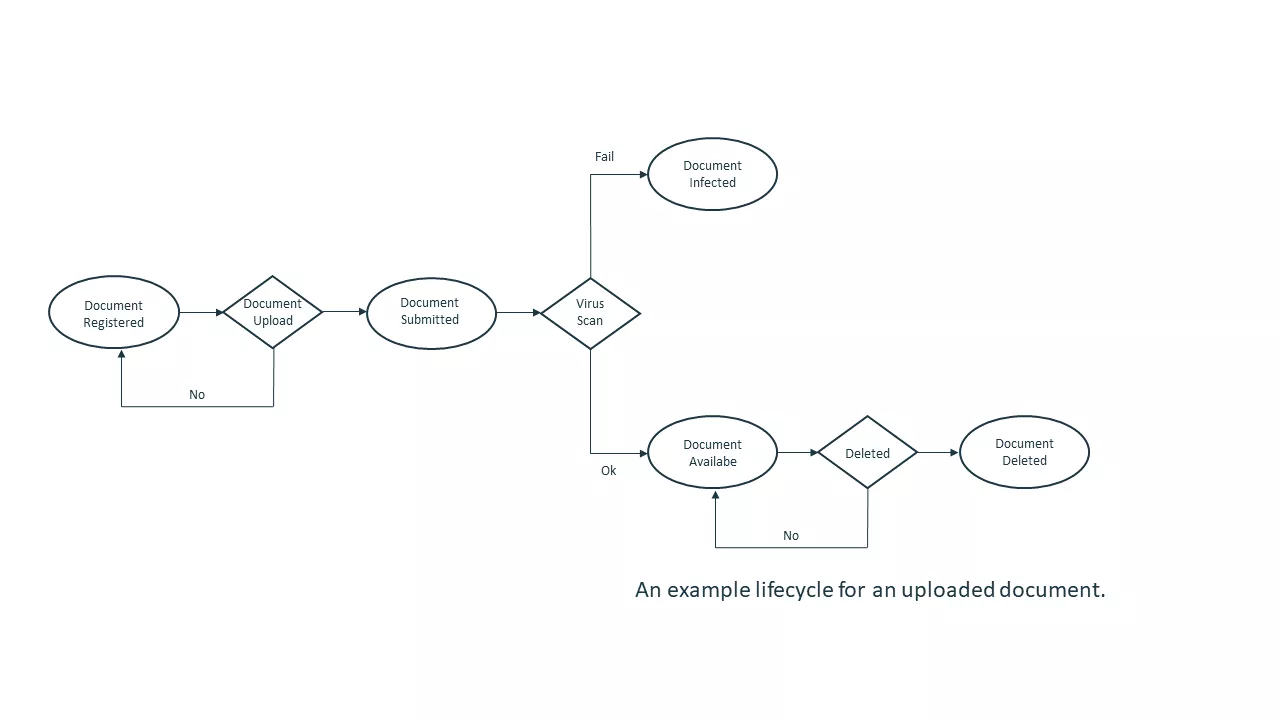

Even when the permission models match, the application and the MFT solution must be linked so that they work in tandem. Because file calls and metadata calls happen separately, the application may not know the file’s status. This opens the door to scenarios where users try to access a file before it is available (a form of race condition), or where the application is not notified of actions taken on the file, such as a failed virus scan.

To address these issues, implement a synchronization mechanism between the two components. One approach is to define a document lifecycle that tracks each file’s status from creation to deletion and ensures that the application is informed of every status change.
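
The lifecycle can be modeled as a small state machine. In this sketch, the states, the allowed transitions, and the `notify` callback are assumptions for illustration; in practice the MFT solution would drive the transitions (for example, after a virus scan), and `notify` might publish to a webhook or message queue that the application consumes.

```python
from enum import Enum
from typing import Callable


class DocumentState(Enum):
    PENDING = "pending"      # metadata created via the API, file not yet received
    SCANNING = "scanning"    # file received by the MFT, virus scan in progress
    AVAILABLE = "available"  # scan passed, file may be downloaded
    REJECTED = "rejected"    # scan failed, file must not be served
    DELETED = "deleted"


# Allowed transitions (hypothetical); anything else is an out-of-order event.
TRANSITIONS = {
    DocumentState.PENDING: {DocumentState.SCANNING, DocumentState.DELETED},
    DocumentState.SCANNING: {DocumentState.AVAILABLE, DocumentState.REJECTED},
    DocumentState.AVAILABLE: {DocumentState.DELETED},
    DocumentState.REJECTED: {DocumentState.DELETED},
    DocumentState.DELETED: set(),
}


class DocumentLifecycle:
    def __init__(self, document_id: str, notify: Callable[[str, DocumentState], None]) -> None:
        self.document_id = document_id
        self.state = DocumentState.PENDING
        self._notify = notify

    def transition(self, new_state: DocumentState) -> None:
        """Move to `new_state` if allowed, then inform the application."""
        if new_state not in TRANSITIONS[self.state]:
            raise ValueError(f"illegal transition {self.state.value} -> {new_state.value}")
        self.state = new_state
        # Every change is reported, including failed scans.
        self._notify(self.document_id, new_state)

    def can_download(self) -> bool:
        # Only serve the file once it has passed every check.
        return self.state is DocumentState.AVAILABLE
```

Because `can_download` is only true in the `AVAILABLE` state, the API can refuse a download request that arrives before the scan has passed, which closes the race condition described above.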

Permissions

If files need to be shared with users other than the uploader, permissions on the MFT platform require additional management so that collaborators can be added or removed as needed.
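
A minimal sketch of per-file sharing, assuming the uploader is the only one allowed to add or remove collaborators. `ShareRegistry` is a hypothetical in-memory helper; a real deployment would persist this data and mirror it into the MFT platform’s own permission model.

```python
class ShareRegistry:
    """Hypothetical in-memory record of who may access each file."""

    def __init__(self) -> None:
        self._owners: dict[str, str] = {}
        self._collaborators: dict[str, set[str]] = {}

    def register(self, file_id: str, owner: str) -> None:
        """Record the uploader as the file's owner, with no collaborators yet."""
        self._owners[file_id] = owner
        self._collaborators[file_id] = set()

    def add_collaborator(self, file_id: str, requested_by: str, user: str) -> None:
        self._require_owner(file_id, requested_by)
        self._collaborators[file_id].add(user)

    def remove_collaborator(self, file_id: str, requested_by: str, user: str) -> None:
        self._require_owner(file_id, requested_by)
        self._collaborators[file_id].discard(user)

    def can_access(self, file_id: str, user: str) -> bool:
        """The owner and current collaborators may access the file; nobody else may."""
        if file_id not in self._owners:
            return False
        return user == self._owners[file_id] or user in self._collaborators[file_id]

    def _require_owner(self, file_id: str, requested_by: str) -> None:
        # Assumed policy: only the uploader may change who the file is shared with.
        if self._owners.get(file_id) != requested_by:
            raise PermissionError(f"{requested_by} may not change sharing for {file_id}")
```

The same `can_access` check would need to be enforced on both the API and the MFT side, for the reasons given in the Security section.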

Conclusion

Integrating file transfers with an API can be challenging, but the dual system approach can help overcome the technical limitations of either system on its own. While there are security and lifecycle considerations to keep in mind, this approach lets you make full use of each system without adding a new ingress point to secure. By securing both systems with the same protocol and implementing a synchronization mechanism, you can ensure that permissions are correctly correlated and that the application is aware of each file’s status.
